The New Era of Vocal Presence

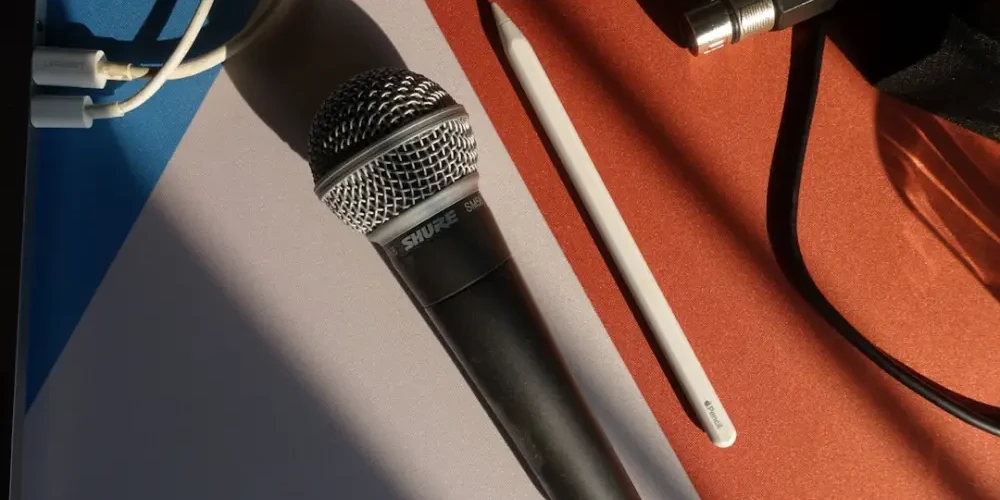

Have you noticed? The voices in your favorite podcasts sound crisper, more intimate, and more consistent than they did just a few years ago. While high-end microphones and sound-treated rooms at studios like Calypso Room still play a vital role, there is a new silent partner in the booth: Artificial Intelligence. AI is no longer just about generating robotic text-to-speech; it is fundamentally changing the way we record, edit, and perceive the human voice in modern sound production.

For podcasters and audio creators, this shift is more than a technical curiosity—it is a practical revolution. AI is helping creators bridge the gap between a home-recorded demo and a studio-quality production, making professional sound more accessible than ever before. In this guide, we will explore how these technologies are being used today and how you can practically integrate them into your own creative audio process.

Beyond the Robot: The Rise of Natural AI Enhancement

In the early days of digital audio, noise reduction often left voices sounding “underwater” or metallic. Today, neural networks are trained to tell the complex spectral patterns of human speech apart from the steady hum of an air conditioner or the hiss of a budget preamp. Instead of simply attenuating whole frequency bands, many of these tools estimate what the clean voice should sound like and rebuild it, filling in the gaps to create a fuller, more resonant sonic texture.

Cleaning Up the Clutter with AI Restoration

One of the most practical applications of AI in the studio is restoration. Tools like Adobe Podcast or iZotope RX use machine learning to analyze a recording and strip away reverberation and background noise. For the modern podcaster, this means that an interview recorded in a noisy cafe can, with careful processing, often be brought close to the sound of a controlled studio environment. That gives you more flexibility in where and how stories are told without sacrificing the professional quality listeners expect.
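For contrast with the machine-learning tools named above, here is a minimal sketch of the older, non-AI approach: a frame-based noise gate. It assumes mono float samples between -1.0 and 1.0 plus a short stretch of room tone for measuring the noise floor. The names and defaults (`noise_gate`, `margin_db`, `floor_gain`) are illustrative choices, not any product's API. It can only turn quiet frames down, which is exactly the kind of "cutting" that modern restoration tools try to improve on.

```python
import math

def rms(frame):
    """Root-mean-square level of a list of float samples."""
    return math.sqrt(sum(s * s for s in frame) / len(frame)) if frame else 0.0

def noise_gate(samples, noise_profile, frame_size=256, margin_db=6.0, floor_gain=0.1):
    """Attenuate frames whose RMS level sits close to the measured noise floor.

    samples:       mono float samples in [-1.0, 1.0]
    noise_profile: a stretch of room tone containing only background noise
    margin_db:     how far above the noise floor a frame must be to count as speech
    floor_gain:    gain applied to gated frames (0.1 is roughly -20 dB)
    """
    # Convert the dB margin to a linear factor and set the gate threshold.
    threshold = rms(noise_profile) * 10 ** (margin_db / 20)
    out = []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        # Frames louder than the threshold pass untouched; the rest are turned down.
        gain = 1.0 if rms(frame) > threshold else floor_gain
        out.extend(s * gain for s in frame)
    return out
```

A hard on/off gain like this can click at frame boundaries, so practical gates smooth the gain with attack and release times. It also does nothing about noise sitting underneath the speech itself, which is where learned models earn their keep.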

The Magic of Voice Leveling and Tone Matching

Consistent volume is the hallmark of a professional podcast. Traditionally, this required hours of manual compression and gain riding. AI tools now automate much of this by analyzing the entire track and adjusting the gain so the speaker’s level stays consistent from start to finish. More impressively, some tools can now match the “tone” of different microphones. If you record an intro on a high-end studio mic and an interview on a portable device, AI can help blend the two so the transition sounds seamless to the listener.
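The gain riding that AI leveling automates can be sketched in its simplest, rule-based form: measure each short frame’s RMS level and nudge the gain toward a target. This is a hypothetical illustration, not how any particular AI product works, and `target_rms`, `max_gain`, `smoothing`, and `silence_rms` are assumed parameters chosen for readability. It assumes mono float samples between -1.0 and 1.0.

```python
import math

def rms(frame):
    """Root-mean-square level of a list of float samples."""
    return math.sqrt(sum(s * s for s in frame) / len(frame)) if frame else 0.0

def auto_level(samples, target_rms=0.1, frame_size=512, max_gain=4.0,
               smoothing=0.8, silence_rms=1e-4):
    """Ride the gain frame by frame so speech hovers near target_rms.

    smoothing (0..1) blends each new gain with the previous one so level
    changes glide instead of jumping; max_gain stops quiet passages and
    room noise from being boosted without limit.
    """
    out = []
    gain = 1.0
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        level = rms(frame)
        if level > silence_rms:  # near-silent frames keep the current gain
            wanted = min(target_rms / level, max_gain)
            gain = smoothing * gain + (1 - smoothing) * wanted
        # Apply the gain and hard-clip to the legal sample range.
        out.extend(max(-1.0, min(1.0, s * gain)) for s in frame)
    return out
```

The `max_gain` cap is the important design choice: without it, a leveler would pull pauses and breaths up toward full speaking level, flattening exactly the dynamics that make a voice sound human.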

Practical Strategies for Integrating AI Into Your Workflow

Adopting AI doesn’t mean letting a machine take over your creative vision. Instead, it’s about using these tools to handle the repetitive, technical tasks so you can focus on the art of storytelling. Here is a practical approach to bringing AI into your production routine:

- Start with AI-Driven Denoisers: Before you even begin EQing, run your raw audio through a light AI noise reduction pass. This creates a clean canvas for further creative mixing.

- Use AI for “Punch-ins” and Corrections: Some modern tools let you fix a misspoken word by simply typing the correction. Typically after you have trained a model on recordings of your own voice, the AI generates the word in that voice, saving you from setting up the mic again for a single-sentence re-record.

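- Leverage Transcription-Based Editing: Platforms like Descript let you edit audio by deleting text. AI transcription ties each word to its position in the recording, so removing a word from the transcript cuts the matching slice of audio and stitches the remaining audio back together, significantly speeding up the rough-cut phase of production.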

- Automate the Master: While custom mastering is ideal, AI mastering services can provide a quick, balanced output for daily content or social media clips, helping your loudness land on common delivery targets such as the roughly -16 LUFS often recommended for podcasts.
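At its core, the loudness side of an automated master applies one static gain so the program reaches a target level without clipping. The sketch below assumes mono float samples and uses plain RMS in dBFS as a stand-in for LUFS. A real loudness meter follows ITU-R BS.1770 (K-weighting plus gating), and this sketch checks sample peaks rather than true peaks, so treat its numbers as approximate. The `target_db` and `ceiling_db` defaults are illustrative assumptions.

```python
import math

def rms_dbfs(samples):
    """RMS level in dBFS. A stand-in only: real LUFS measurement
    (ITU-R BS.1770) adds K-weighting and gating, so values will differ."""
    mean_sq = sum(s * s for s in samples) / len(samples)
    return 10 * math.log10(mean_sq) if mean_sq > 0 else float("-inf")

def peak_dbfs(samples):
    """Highest absolute sample value in dBFS (sample peak, not true peak)."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak) if peak > 0 else float("-inf")

def normalize(samples, target_db=-16.0, ceiling_db=-1.0):
    """Apply one static gain so the RMS level approaches target_db,
    but never so much that the sample peak exceeds ceiling_db."""
    level = rms_dbfs(samples)
    if level == float("-inf"):
        return list(samples)  # pure silence: nothing to normalize
    gain_db = target_db - level
    # Back off the gain if it would push peaks past the ceiling.
    gain_db = min(gain_db, ceiling_db - peak_dbfs(samples))
    gain = 10 ** (gain_db / 20)
    return [s * gain for s in samples]
```

When the peak ceiling wins, the output ends up quieter than the target; that is the point where a real mastering chain would reach for a limiter rather than simply settling for less loudness.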

Preserving the Human Element and Sonic Identity

As we embrace these advanced tools, a common concern arises: will we lose the “human” touch? The key is to use AI as an enhancement, not a replacement. A voice is more than just data; it carries emotion, breath, and personality. At Calypso Room, we believe that the goal of modern sound production is to build a sonic identity that feels authentic.

When using AI voice enhancements, it is important to maintain the natural dynamics of speech. Over-processing can lead to the “uncanny valley” of audio, where a voice sounds too perfect to be real. To avoid this, always A/B test your AI-processed audio against the original at matched loudness, since the louder version tends to sound “better” even when it isn’t. If the AI is stripping away the subtle breaths and pauses that convey emotion, dial back the intensity. The best AI work is the kind that the listener never notices.
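A fair A/B test needs both versions at the same level, because listeners tend to prefer whichever one is louder. Here is a minimal sketch, assuming mono float sample lists, that matches the processed take to the original’s RMS level and shuffles the pair so you don’t know which is which until afterward. `level_match` and `blind_pair` are hypothetical helper names, and RMS matching is a simple approximation of true loudness matching.

```python
import math
import random

def rms(samples):
    """Root-mean-square level of a list of float samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples)) if samples else 0.0

def level_match(processed, reference):
    """Scale `processed` so its RMS equals that of `reference`.

    Returns the scaled samples and the gain change in dB, which shows how
    much of the processed version's apparent improvement was just loudness.
    """
    p, r = rms(processed), rms(reference)
    if p == 0.0 or r == 0.0:
        return list(processed), 0.0
    gain = r / p
    return [s * gain for s in processed], 20 * math.log10(gain)

def blind_pair(original, processed, rng=random):
    """Return both versions, level-matched, in random order as (label, samples)."""
    matched, _ = level_match(processed, original)
    pair = [("original", list(original)), ("processed", matched)]
    rng.shuffle(pair)
    return pair

# Usage: play pair[0] as "A" and pair[1] as "B", pick a favorite,
# then reveal the labels.
pair = blind_pair([0.2, -0.2] * 100, [0.4, -0.4] * 100, rng=random.Random(1))
```

If the processed take still wins once the levels match, the enhancement is doing real work; if the preference disappears, the “improvement” was mostly volume.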

The Future of Listening

The way we hear voices is becoming more personalized. We are moving toward a future where AI might adjust the frequency response of a podcast in real-time to match the listener’s specific hearing profile or the environment they are in. For creators, this means the focus will shift even more toward the quality of the performance and the narrative structure, trusting that technology will handle the technical delivery.

Whether you are building a sonic identity from scratch or looking to polish a seasoned show, AI offers a suite of tools that make professional audio more attainable. By understanding the practical applications of these technologies—from noise restoration to text-based editing—you can ensure your voice is heard exactly the way you intended: clear, resonant, and undeniably human.

The evolution of sound production is an ongoing journey. As these tools continue to mature, the line between silence and symphony becomes thinner, allowing every creator the chance to produce something truly world-class.
